Exposed AI Endpoints

Your AI Infrastructure is the New Attack Surface

You are likely standing on a digital landmine that you laid yourself. While most organizations obsess over “prompt injection” or AI hallucinations, savvy attackers target the unsecured infrastructure and endpoints that connect your Large Language Models (LLMs) to your internal data. Recent reports from The Hacker News […]

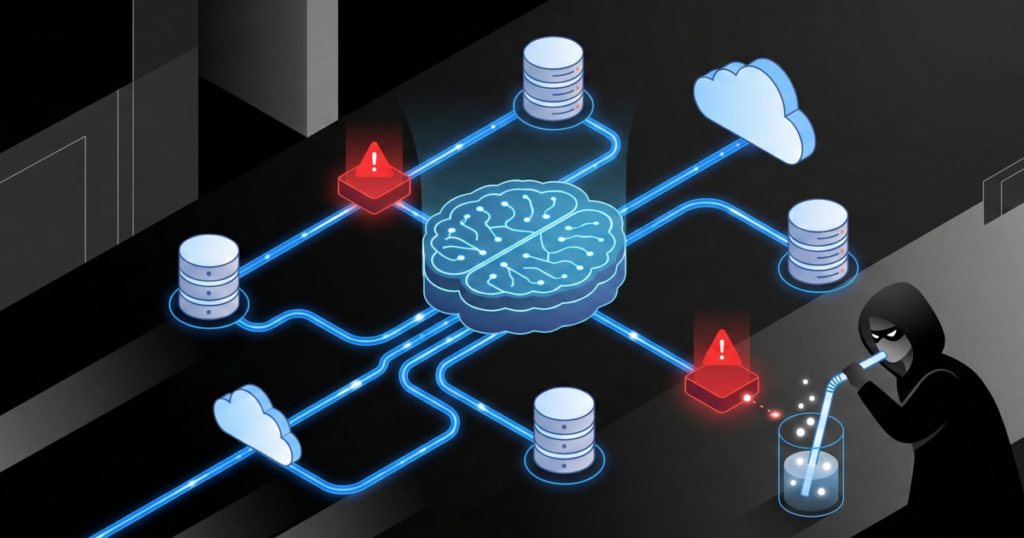

AI Data Poisoning: The Hidden Threat to LLM Integrity

Small Datasets Can Hijack Your AI

Attackers do not need a mountain of lies to brainwash your AI; they only need a tiny drop of “poison.” This vulnerability allows a malicious actor to turn your company’s smartest tool into a sleeper agent that waits for a specific keyword to start sabotaging your operations. If you […]